If you are involved in research, even to a small extent, you may benefit from attending training offered by the Library Research Services team.

On 12 February 2026, between 11:00 and 13:00, there will be an online, practical training workshop on research data management. Attended by over 60 researchers in the last year, ‘Introduction to Writing a Data Management Plan’ is suitable for colleagues new to research (in various roles) and for those of you who may wish to refresh your memory and renew your day-to-day data practices.

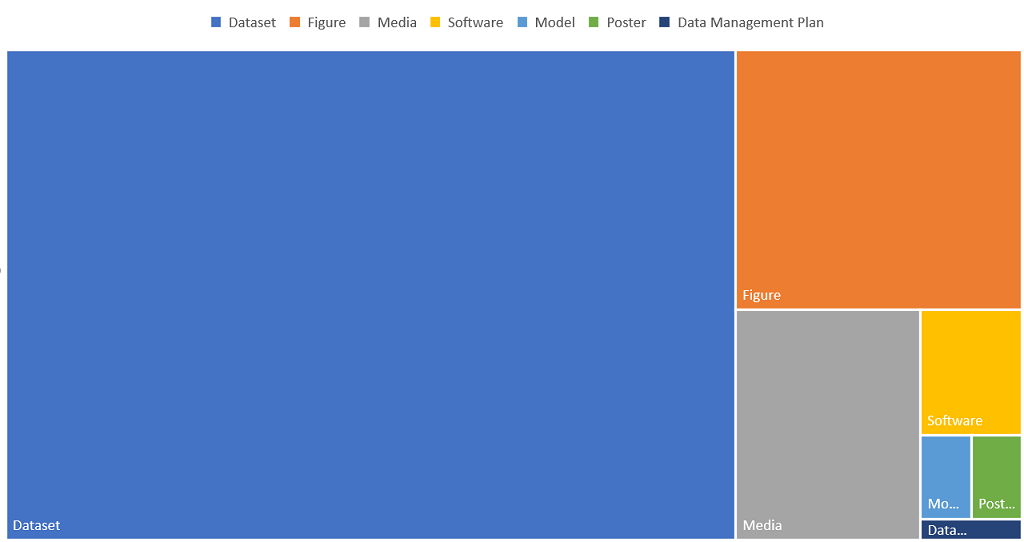

Suitable for all disciplines, this session encourages you to think about what underpins, helps others understand, or validates your research claims and publications. It will include a live demonstration of DMPonline, a web-based tool designed to help you write or update your data management plan, and you will have the opportunity to start outlining your own plan. We will cover considerations for the individual sections of a data management plan (DMP):

- Outlining and detailing your data.

- Data collection methods, consistency and quality assurance.

- Metadata and documentation. How will you ensure that you continue to understand your own work? How will others be able to make sense of it?

- Ethical and legal considerations.

- Short-term, active project storage.

- Long-term storage, sharing and preservation. This will include information about the university’s repository, finding alternative repositories and staying compliant with funder requirements.

These training sessions are run a few times each academic year and we try to provide a mix of in-person and online training. For more information about the support we provide, please visit our website.

Coincidentally, this training session will take place during everyone’s favourite celebration, Love Data Week. So, what better time is there to think about your research data?

You can also explore other Library Research Services training sessions.