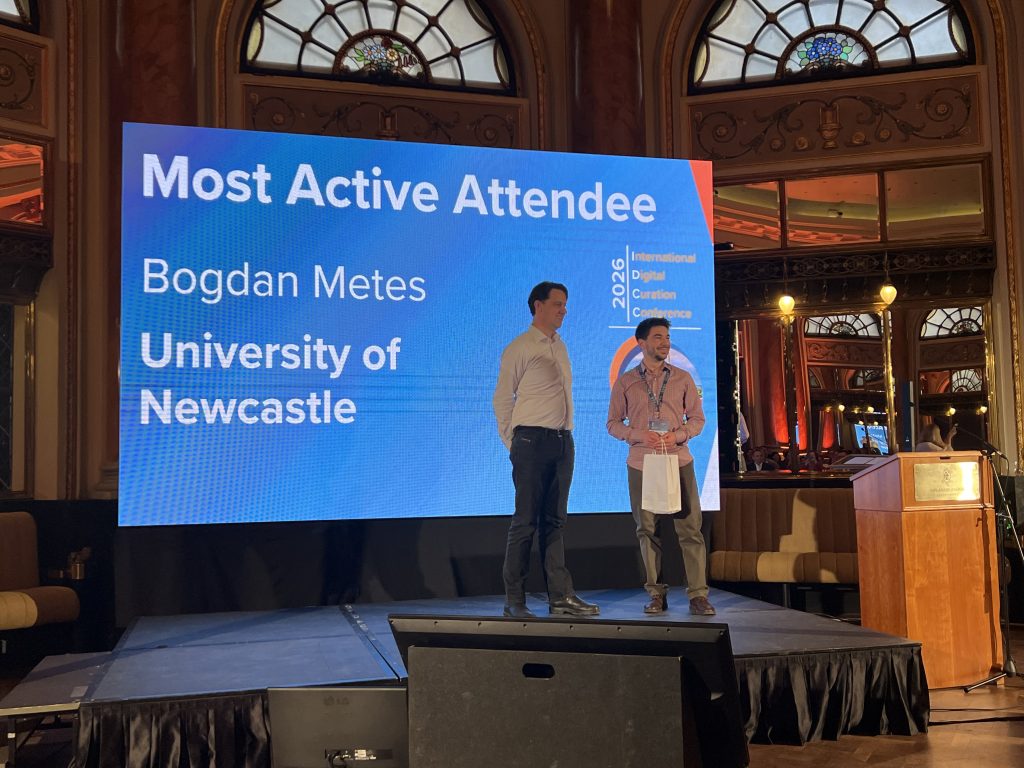

Earlier this year, I was very fortunate to have the opportunity to attend the 20th International Digital Curation Conference (IDCC) in Zagreb, Croatia. The IDCC “is an established annual event with a unique place in the digital curation community, reaching out to individuals, organisations and institutions across all disciplines and domains involved in curating data and providing an opportunity to get together with like-minded data practitioners to discuss policy and practice” (Digital Curation Centre, 2026).

It was particularly humbling and inspiring to see more than 200 colleagues from institutions across the world who proactively try to improve not only open research practice, but the support we give researchers in engaging with these practices. The theme for this year’s event was AI, austerity, and authoritarianism: contemporary challenges in digital curation. The posters and presentations are openly available in the IDCC26 Conference Materials Zenodo collection.

This year, the keynote talks were very inspiring. During the opening talk, Antica Čulina spoke passionately about open research practices such as preregistration, open data, open code and preprints. The talk explored whether these practices are truly and sustainably achievable, considering the infrastructure they require, the culture that supports them and the incentives that would stimulate engagement.

The closing talk encouraged librarians and other people who curate knowledge to be resilient when facing difficult times, funding uncertainty and authoritarianism. Lynda Kellam and Mikala Narlock delivered a powerful talk about the Data Rescue Project, reminding us of our individual and collective responsibilities as curators or creators of knowledge. They suggested that we may need to change the way we work, advocating for “embracing redundancy” of files and noting that duplicating work and having more than two back-ups can sometimes prove beneficial.

Automation

Using new technologies to streamline processes

As you can probably imagine, many sessions discussed making the work of data curators, or researchers, more efficient through automation, either with innovative programs, or with AI. Regarding the use of AI, it was questioned whether existing open-source or commercial tools should be trusted. As an alternative, the use of in-house AI tools was also discussed. One such talk among many was Enhancing the Benefits of Machine-Actionable DMPs with Generative AI.

Who will automation help?

A matter for debate is whether using such tools would actually save us time, or simply change our process of ensuring that the output is sufficiently robust. And whose time would it even save:

- the person writing a data management plan, who might use AI to draft it or to review it before submission?

- or the person reviewing it?

Avoiding duplication

Researchers are busy people. A preregistration, an ethical review and a data management plan often contain the same pieces of information that were used in a grant application. Are researchers expected to rewrite the same information? Interoperability of files and systems, and the capability to harvest relevant information for the right purpose, were recommended as improvements to current processes. Colleagues from the Eindhoven Institute of Technology described a tool that extracts structured metadata from research proposals, based on a user-defined schema, to populate data management plans.

Other tools

However, automation doesn’t always require AI. One informative talk, delivered by a colleague from the UK Centre for Ecology & Hydrology (UKCEH), who host the Environmental Information Data Centre (EIDC), showcased a tool they designed to help check research data compliance with the FAIR principles. The File Check Assistant “tests CSV data files for basic principles of re-usability, such as file structure and encoding, and helps guide users towards good practice”. This sounds particularly useful for large files, as it can quickly identify whether values are missing or fall outside the defined range. While this tool was created to tackle issues with one specific type of file, it made me dream of tools that could help with a variety of other datasets: from flagging missing metadata to checking whether interview transcripts are sufficiently anonymised.
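To make the idea of an automated file check more concrete, here is a minimal sketch in Python. It is not the File Check Assistant and does not reflect how UKCEH built their tool; it only illustrates the kinds of checks described above (encoding, consistent structure, missing values, values outside a defined range). The function name `check_csv`, the sample data and the `temperature_c` column are all assumptions made up for illustration.

```python
import csv
import io


def check_csv(raw: bytes, ranges: dict[str, tuple[float, float]]) -> list[str]:
    """Return a list of human-readable problems found in a CSV file.

    `ranges` maps a column name to its allowed (minimum, maximum) values.
    Hypothetical helper written for this post, not part of any real tool.
    """
    # Encoding check: the file should decode cleanly as UTF-8.
    try:
        text = raw.decode("utf-8")
    except UnicodeDecodeError:
        return ["file is not valid UTF-8"]

    reader = csv.reader(io.StringIO(text, newline=""))
    header = next(reader, None)
    if header is None:
        return ["file is empty"]

    problems = []
    # The header is row 1, so data rows are counted from 2.
    for row_number, row in enumerate(reader, start=2):
        # Structure check: every row should have as many fields as the header.
        if len(row) != len(header):
            problems.append(
                f"row {row_number}: expected {len(header)} fields, found {len(row)}"
            )
            continue
        for name, value in zip(header, row):
            if value.strip() == "":
                problems.append(f"row {row_number}: missing value in '{name}'")
            elif name in ranges:
                low, high = ranges[name]
                try:
                    number = float(value)
                except ValueError:
                    problems.append(f"row {row_number}: '{name}' is not numeric")
                    continue
                if not low <= number <= high:
                    problems.append(
                        f"row {row_number}: '{name}' value {value} "
                        f"outside {low} to {high}"
                    )
    return problems


# Made-up sample: one missing value, one out-of-range value, one short row.
sample = b"site,temperature_c\nA,12.5\nB,\nC,99\nD\n"
for problem in check_csv(sample, {"temperature_c": (-50, 60)}):
    print(problem)
```

A real tool would need to go much further, for example by detecting other encodings, handling very large files in chunks, and letting users define the expected schema, but even a short script like this hints at how much routine checking could be taken off a curator's desk.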

Experts, communication and silos

The academic world is a large community, and each university is a community itself. Within a university there are many specialised sub-communities. They work independently from each other and develop their expertise in isolation. They are known as ‘silos’. Researcher silos are unique in their needs, and specialised in their solutions and protocols. Consequently, their research data practices will be unique from planning, to documenting, to sharing.

Similarly, professional services teams who support research are also siloed and focus on their individual areas of expertise. Among such teams, colleagues responsible for research data management offer general advice intended to be relevant to as many researchers as possible.

Colleagues from the Swedish University of Agricultural Sciences (SLU) advocated for better collaboration between academic and professional services silos. The SLU solution involved widening the use of their library’s online enquiry service, so that other university teams would also receive relevant queries through it. This facilitated smarter collaboration and knowledge sharing between support teams. Sharing knowledge and tailoring existing solutions to help others can be powerful tools in overcoming challenges and resource shortages with the aim of making our research outputs FAIR.

Data management plans (DMPs)

DMPs are always mentioned when two research data professionals are in the same room. This might sound cynical, or be perceived as a joke, but they are mentioned because they are a crucial part of the research process, and they are time-consuming and difficult to get right. It was eye-opening to see how differently they can be approached by different universities. Some of us strongly encourage their use. Others mandate them for all research projects. In some institutions, a small number of support staff, like me, review them on request. In other places, this responsibility is shared with supervisors in the case of postgraduate research projects.

An entirely different element is the choice of tools and mechanisms that we use to write and review DMPs, and I won’t refer to genAI tools this time. At Newcastle University, we use DMPonline, employing a generic template from the Digital Curation Centre, along with many funder-specific templates, each with detailed guidance. Some dynamic alternatives to DMPonline exist, giving users templates that tailor themselves based on previous answers. In theory, this should ensure that questions are as relevant as possible to researchers who might struggle with static templates. The alternatives discussed were Data Stewardship Wizard and FAIR Wizard. The former is an open-source tool, whereas the latter uses the former’s engine.

Another interesting approach employed templates provided in text documents. The documents are simple and not overwhelming, as the guidance exists separately, on the website. Researchers are given the opportunity to request feedback on their DMPs by submitting a request form via the library’s online enquiry service. All the options above are interesting and have their merits. This made me reflect on whether one of them would be better at bringing together advice, support and guidance, while maintaining relevance to as many experts as possible, in a variety of disciplines. What is the best way to incorporate definitions, encourage reflection and promote inclusion for colleagues who may not be familiar, or comfortable, with the language that has become standard in research data management circles?

Other takeaways and applications

Data access statements that use standardised language

Something close to my heart (or my day-to-day job) is the transparency of data access statements. Colleagues at the University of Bristol emphasised how important it is for data access statements to use standardised language. It was suggested that we should use a taxonomy similar to CRediT. At a local level, I will continue to highlight the importance of data access statements, the need for enhanced clarity regarding the level of access to the data, persistent identifiers (such as DOIs) and additional information needed for accessing research data. Encouraging the research community to have clearly worded data availability statements in publication metadata will be a great step forward in enhancing data FAIRness and, therefore, research transparency.

Key decision moments in the research data lifecycle

Another takeaway is João Aguiar Castro’s work, which aims to “enhance the usability, interpretability, and long-term value of DMPs” for all involved in research. João’s framework inspired me to assess the messages and guidance I provide in training and one-to-one consultation meetings regarding the level of detail required for a data management plan. While most of us already strive for this, using clearer language and digging deeper into researchers’ needs at different stages of their projects will most likely lead to more relevant conversations and useful DMPs.

Networking and community

The IDCC was such a welcoming event. The attendees are all open to sharing knowledge and improving research transparency: it comes with the job description. The community is one that relies on cross-institution collaboration because at our respective institutions there are so few of us. And we are all continuously learning.

I am still working through my notes from the conference and I hope, in the near future, to arrange meetings with some of the colleagues I met. We are already exchanging ideas via LinkedIn or email, and some attendees have been sending me additional information that I had asked for.

Zagreb

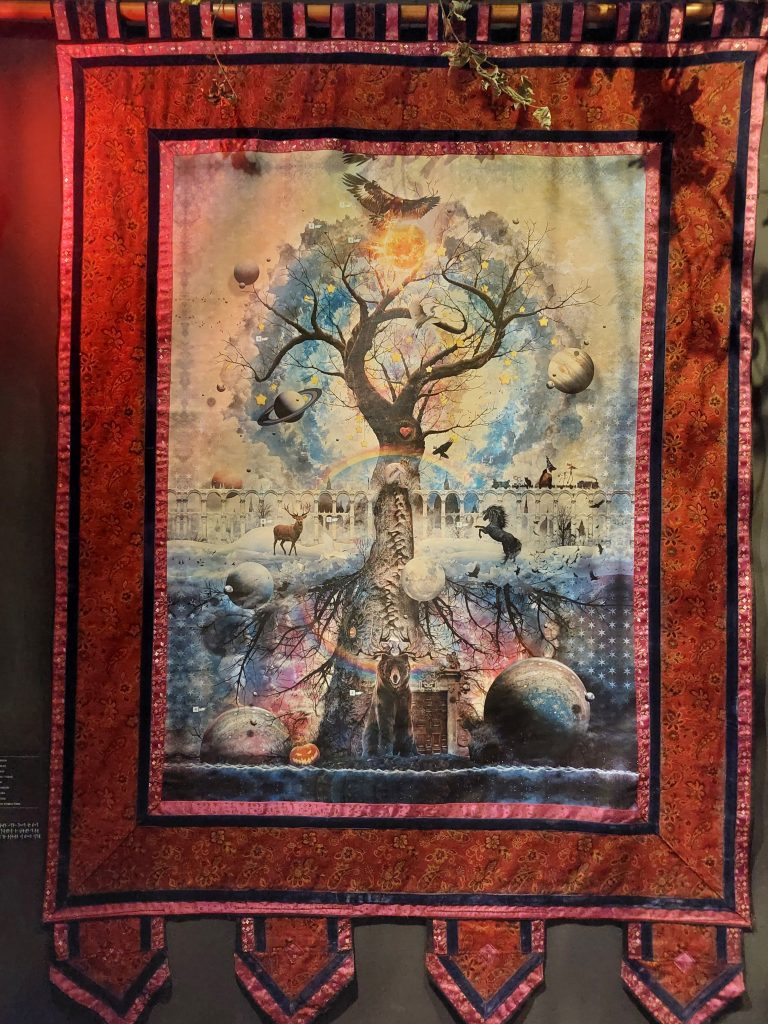

I was very lucky to spend some time as a tourist in Zagreb as well. My odd brain found an interesting link between the theme of the IDCC and Zagreb, a city full of museums, some of them rather serious, others rather whimsical. It felt serendipitous that this year’s event, focused on contemporary challenges in digital curation, took place in a city that is so focused on remembering and preserving the knowledge of the past. One of the several museums I visited was truly memorable. The Museum of Lost Tales provided a unique look into the folklore and mythology of Croatia. Using allegory in the beginning, it showcased a fantastic creation myth that was sometimes similar to Norse mythology. For example, there is a world tree (Stablo svijeta), and a fierce and formidable warrior god of thunder (Perun), with red hair and beard.