In semester 2 of the 2016/2017 academic year we ran our first ever Numbas final exam at Newcastle, for a module in quantitative methods for Business School students.

We have run locked-down exam sittings before for in-course assessment, in particular here in the School of Mathematics, Statistics and Physics, where we host a diagnostic test for all stage 1 students. However, this was our first official final exam, and it was a non-trivial undertaking, with over 300 students across 4 computer clusters.

The module in question introduces students to the techniques of collecting, summarising and analysing data which are necessary for modern business decision making. As such, most of the assessment relies on students entering numeric answers, or interpreting the results in the form of a multiple-choice question.

Given the type of questions required, the exam is an obvious candidate for electronic assessment. But why use Numbas?

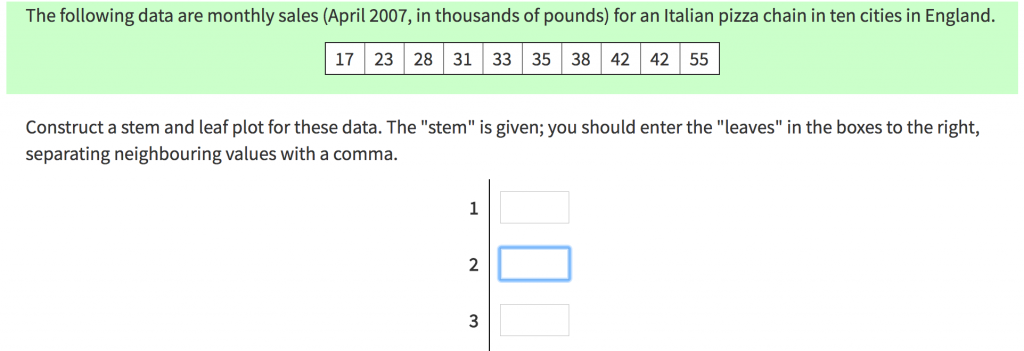

One reason is the superior user experience provided by the Numbas interface. Consider the following question from the specimen exam, in which students are asked to complete a stem-and-leaf plot. For those of you not mathematically inclined, this involves taking a list of numbers and grouping them by their leading digits.

In a written exam the marker could process the student’s answer easily, but it is not trivial to mark this automatically. What if the student puts the numbers in an illogical order, or adds a space but no comma? Numbas handles all of this without over-burdening the students with the instructions that would otherwise be required.
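To give a feel for what lenient marking of a stem-and-leaf row involves, here is a minimal Python sketch. It is not how Numbas itself marks the question; the function names and the marking rule (same leaves, in any order, separated by commas, spaces or both) are assumptions made for illustration, and it only handles non-negative whole numbers.

```python
import re
from collections import defaultdict


def stem_and_leaf(values):
    """Group non-negative integers by stem (tens) with sorted leaves (units)."""
    plot = defaultdict(list)
    for value in values:
        stem, leaf = divmod(value, 10)
        plot[stem].append(leaf)
    return {stem: sorted(leaves) for stem, leaves in sorted(plot.items())}


def parse_leaves(text):
    """Read one row of leaves, accepting commas, spaces, or both as separators."""
    tokens = re.split(r"[,\s]+", text.strip())
    return [int(token) for token in tokens if token]


def mark_row(student_text, expected_leaves):
    """Hypothetical rule: a row is correct if it holds the same leaves in any order."""
    try:
        return sorted(parse_leaves(student_text)) == sorted(expected_leaves)
    except ValueError:
        # Anything that is not a whole number is marked wrong rather than crashing.
        return False


data = [12, 15, 21, 27, 23, 34]
plot = stem_and_leaf(data)
print(plot)
print(mark_row("7 1,3", plot[2]))
```

A row typed as `7 1,3` is accepted for the stem 2 here even though it is out of order and mixes separators, which is the kind of tolerance a human marker applies without thinking.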

There was a fairly significant increase in marks compared with the 2015/16 academic year, when the test was run through Blackboard and contained very similar questions. Though there is no conclusive evidence, one theory is that the students were more comfortable inputting answers into the same system they had used for their mock exam and in-course assessment.

Another significant benefit of using Numbas is the randomisation of the question variables: each student is presented with a unique version of the questions, in this case usually a unique data set. This additional security, in computer clusters not designed for holding examinations, is particularly welcome, given that the answers are very short and would be easy to observe.
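The idea of giving each student their own data set can be sketched in a few lines of Python. This is only an illustration of the general technique and not a description of how Numbas generates its variables; the function name, the seed format and the value range are all hypothetical.

```python
import random


def student_dataset(student_id, exam_seed="specimen-exam", size=12, low=10, high=59):
    """Return a reproducible data set for one student (hypothetical helper).

    Seeding the generator with the exam and student identifiers means the same
    student always sees the same numbers, while different students will almost
    always see different ones. Uniqueness is likely but not guaranteed.
    """
    rng = random.Random(f"{exam_seed}:{student_id}")
    return [rng.randint(low, high) for _ in range(size)]


print(student_dataset("student-001"))
print(student_dataset("student-002"))
```

Because the data set is derived from the seed, the expected answers can be recomputed for each student at marking time instead of being stored alongside the paper.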

The exam was run using the OLAF lockdown browser, following the standard online exam procedures. The test simply opened as a Numbas exam, rather than a Blackboard item.

We were very pleased with our first run of a final exam. If you have any questions about running something similar in your course then please don’t hesitate to get in touch.

Numbas is developed by the e-learning unit in the School of Mathematics, Statistics and Physics. If you have any questions or are interested in using Numbas in your course, please contact Chris Graham at christopher.graham@ncl.ac.uk.