L. Ya. Rosenblum and A.V. Yakovlev.

Signal graphs: from self-timed to timed ones,

Proc. of the Int. Workshop on Timed Petri Nets,

Torino, Italy, July 1985, IEEE Computer Society Press, NY, 1985, pp. 199-207.

https://www.staff.ncl.ac.uk/alex.yakovlev/home.formal/LR-AY-TPN85.pdf

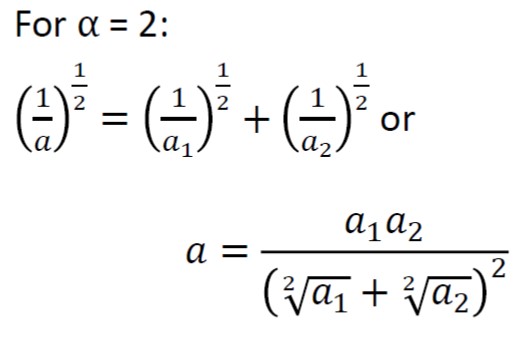

A paper establishing an interesting relationship between the interleaving and true causality semantics using algebraic lattices. It also identifies a connection between the classes of lattices and the property of generalisability of concurrency relations (from arity N to arity N+1), i.e. the conditions for answering questions such as: if three actions A, B and C are all pairwise concurrent, i.e. ||(A,B), ||(A,C), and ||(B,C), are they also concurrent “in three”, i.e. ||(A,B,C)?

L. Rosenblum, A. Yakovlev, and V. Yakovlev.

A look at concurrency semantics through “lattice glasses”.

In Bulletin of the EATCS (European Association for Theoretical Computer Science), volume 37, pages 175-180, 1989.

https://www.staff.ncl.ac.uk/alex.yakovlev/home.formal/lattices-Bul-EATCS-37-Feb-1989.pdf
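The pairwise-versus-“in three” question can be made concrete with a small sketch. The example below is not taken from the paper; it assumes a hypothetical Petri net with a single shared place `p` holding two tokens and three transitions A, B, C that each consume one token, and it treats a set of transitions as concurrent when the marking covers their combined token demand (the usual step-semantics reading).

```python
from itertools import combinations

# Hypothetical net (an assumption for illustration): one shared place "p"
# with 2 tokens; each of the transitions A, B, C needs one token from "p".
marking = {"p": 2}
pre = {"A": {"p": 1}, "B": {"p": 1}, "C": {"p": 1}}


def concurrently_enabled(step, marking, pre):
    """Return True if all transitions in `step` can fire together,
    i.e. the marking covers the sum of their token demands."""
    demand = {}
    for t in step:
        for place, n in pre[t].items():
            demand[place] = demand.get(place, 0) + n
    return all(marking.get(place, 0) >= n for place, n in demand.items())


# Check ||(A,B), ||(A,C), ||(B,C) and then ||(A,B,C).
pairs = {pair: concurrently_enabled(pair, marking, pre)
         for pair in combinations("ABC", 2)}
triple = concurrently_enabled(("A", "B", "C"), marking, pre)

print(pairs)
print(triple)
```

In this assumed net every pair is concurrent but the triple is not, so pairwise concurrency does not generalise to arity three here. With three tokens in `p` it would.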

A paper about the so-called symbolic STGs (Signal Transition Graphs), in which signals can take multiple values. This is often convenient for specifying control at a more abstract level than binary signals. To implement such specifications in logic gates, one needs to solve the problem of binary expansion (encoding) and to resolve all the state coding issues that arise during synthesis of the circuit implementation.

https://www.staff.ncl.ac.uk/alex.yakovlev/home.formal/async-des-methods-Manchester-1993-SymbSTG-yakovlev.pdf
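To give a rough feel for why binary expansion is a problem in its own right, here is a minimal sketch. It is not the method of the paper: the value names, the naive "code = index in list" encoding, and the helper functions `binary_codes` and `changed_bits` are all assumptions made for illustration. It reports which binary signals would have to switch for each change of a multi-valued signal.

```python
def binary_codes(values):
    """Assign each symbolic value a fixed-width binary code in list order
    (a naive, assumed encoding; real encodings are chosen more carefully)."""
    width = max(1, (len(values) - 1).bit_length())
    return {v: format(i, f"0{width}b") for i, v in enumerate(values)}


def changed_bits(codes, src, dst):
    """Indices of the binary signals that differ between the codes of
    `src` and `dst`, i.e. those that must switch on the change src -> dst."""
    return [i for i, (a, b) in enumerate(zip(codes[src], codes[dst])) if a != b]


# Hypothetical four-valued control signal cycling through its values.
values = ["idle", "req", "ack", "done"]
codes = binary_codes(values)

for src, dst in zip(values, values[1:] + values[:1]):
    print(src, "->", dst, codes[src], "->", codes[dst],
          "bits:", changed_bits(codes, src, dst))
```

Under this assumed encoding, `req -> ack` and `done -> idle` each flip two bits. A single symbolic transition then has to be expanded into several binary transitions, and the intermediate codes may coincide with codes of other values, which is one way state coding issues can arise.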

A paper about analysing concurrency semantics using a relation-based approach. Similar techniques are now being developed in the domain of business process modelling and workflow analysis: L. Ya. Rosenblum and A.V. Yakovlev. Analysing semantics of concurrent hardware specifications. Proc. Int. Conf. on Parallel Processing (ICPP89), Pennsylvania State University Press, University Park, PA, July 1989, pp. 211-218, Vol. 3.

https://www.staff.ncl.ac.uk/alex.yakovlev/home.formal/LR-AY-ICPP89.pdf

Simulation of Concurrent Processes. Petri Nets [Text]: a course for system architects, programmers, system analysts, and designers of complex control systems / V. B. Marakhovsky, L. Ya. Rosenblum, A. V. Yakovlev. – St. Petersburg: Professional'naya Literatura, 2014. – 398 pp.: ill., tables; 24 cm. – (Series “Selected Computer Science”). ISBN 978-5-9905552-0-4. In Russian; original title: Моделирование параллельных процессов. Сети Петри.

https://www.researchgate.net/…/Simulation-of-Concurrent-Processes-Petri-Nets.pdf